Best Innovative Minds 2019

The most important and anticipated software competition at ASSIST Software, Best Innovative Minds, took place on the 6th of December, 2019. This competition is an internal event open to all ASSIST employees (except those judging it). Its purpose is to find innovative ideas and solutions that can help the company with its challenges or benefit society.

Participants can form teams of up to three people or compete on their own. For this year’s edition, six students from the Faculty of Electrical Engineering and Computer Science at the University of Suceava (USV) were also selected, giving them the chance to work on a real project and learn from professionals.

The competition is split into two phases:

- Selection → choosing the ideas that could ultimately reach the prototype stage;

- Implementation → building the prototype itself.

Cash prizes totaling over 2,800 euros were awarded to all of the winners and the three winning students also received paid internships at ASSIST.

This year's edition followed the same rules as last year's, except that six students also joined the competition.

In the first stage of the contest, more than 35 ideas were submitted, and the jury selected the six best.

In the final stage, the selected ideas were implemented as working prototypes and presented to a jury made up of professors from the Faculty of Electrical Engineering and Computer Science at USV and representatives from ASSIST Software.

As expected, Best Innovative Minds 2019 was a great success. The jury was impressed by the finalists’ innovative ideas and by their working demonstrations of software and hardware projects, built with the latest technologies and applicable in fields such as health, green energy, forestry, security, and software development.

Our colleagues and the participating students worked in teams, learned from each other, and used their imagination and talent to build prototypes of unique projects that address everyday problems facing the company and the community.

Six teams took part in the final.

The winners:

⦁ 1st place - 1,000€

Machine Learning powered Threat Detector project team: Paul Beresca, Sebastian Hatnean, and Sergiu Ilciuc

⦁ 2nd place - 750€

Green Roof project team: Tudor Moldovan, Iulian Tudurean, and Iulian Lavric

⦁ 3rd place - 500€

Autisma project team: Mihaela Chistol, Cătălin Zambalic, and Andrei Barba

⦁ The Popularity prize (chosen by ASSIST Software employees) – 250€

Wood Beacon project team: Ionut Mindrescu, Tudor Andronic, and Paul Verciuc

The student winners:

The students who ranked in the first three places were each awarded a cash prize and a paid internship.

- 1st place - 150€

Automation visual QA tool project member: Bianca Bordianu

- 2nd place - 100€

Autisma project member: Radu Flocea

- 3rd place - 75€

Horizon App project member: Cristian Istrati

It was a close competition, and the jury admitted that choosing the three winning teams was quite difficult.

All of the projects were brilliant and deserve to be shared, so below we briefly introduce each project that reached the final stage of the Best Innovative Minds 2019 contest.

Machine Learning powered Threat Detector (ENGAGE)

→ Proposed by Paul Beresca, Sebastian Hatnean, and Sergiu Ilciuc

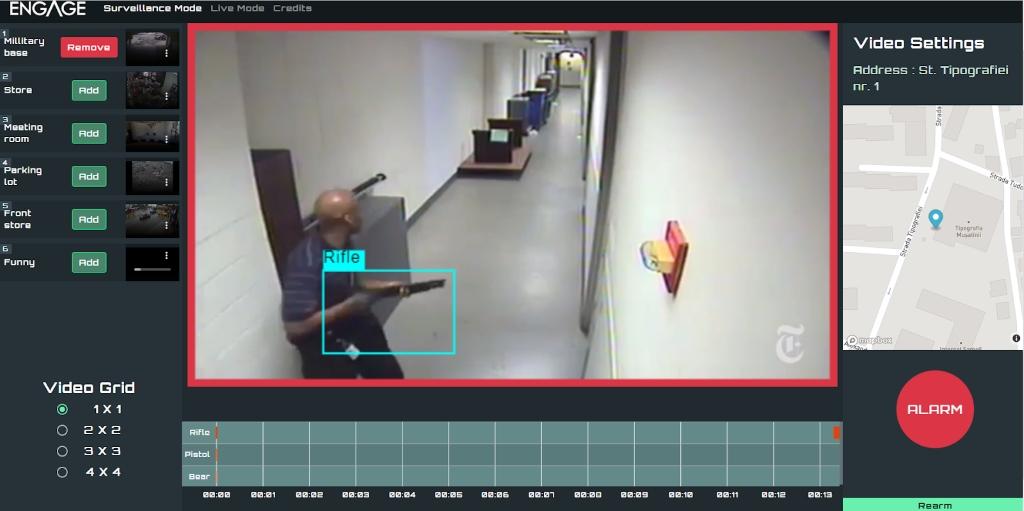

ENGAGE is a machine learning-powered threat detector that aims to identify public threats in real time by scanning live video feeds (images from outdoor or indoor surveillance cameras) for possible threats to human life, such as people carrying weapons or wild animals.

The application has two core components:

- an object detection model based on COCO-SSD;

- a web application that identifies multiple target objects in a single image or video.

The target objects for ENGAGE are:

- Pistols

- Rifles

- Bears

ENGAGE uses TensorFlow with Python for model training, which allows the application to detect multiple objects of the same class or of different classes in a single video frame. For each frame, the application predicts which target objects are present, draws a bounding box to show the user where each object is, and displays the confidence of each prediction as a percentage.
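To make the per-frame step above more concrete, here is a minimal Python sketch of what could happen after the model returns its raw predictions for one frame. It is not ENGAGE's code: the `Detection` type, the `filter_frame` and `describe` helpers, the lowercase label names, and the 0.5 confidence threshold are illustrative assumptions, and the model call itself is left out.

```python
from dataclasses import dataclass

# Hypothetical label names for the three target classes.
TARGET_CLASSES = {"pistol", "rifle", "bear"}


@dataclass
class Detection:
    label: str
    score: float  # model confidence in [0, 1]
    box: tuple    # (x, y, width, height) in pixels


def filter_frame(detections, min_score=0.5):
    """Keep every confident detection of a target class in one frame.

    A frame can hold several objects of the same or different classes,
    so all matches are returned, not just the best one.
    """
    return [
        d for d in detections
        if d.label in TARGET_CLASSES and d.score >= min_score
    ]


def describe(detection):
    """Build the overlay text for a bounding box, confidence as a percentage."""
    x, y, w, h = detection.box
    return f"{detection.label} {detection.score * 100:.0f}% at ({x}, {y}, {w}x{h})"
```

Returning every matching detection rather than only the top one is what lets a single frame report, for example, two pistols and a bear at the same time.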

In the picture below, the right side shows a training image with its labeled bounding box, and the left side shows the model's detection on the same image after 38,817 training steps.

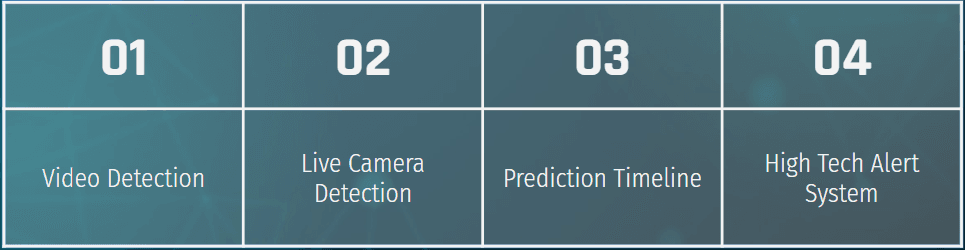

In the ENGAGE application, users can add any video or surveillance camera feed for object detection. In the image below, the model has identified one of the target classes, so the application has added the incident to the timeline below the video and activated the Alert button.

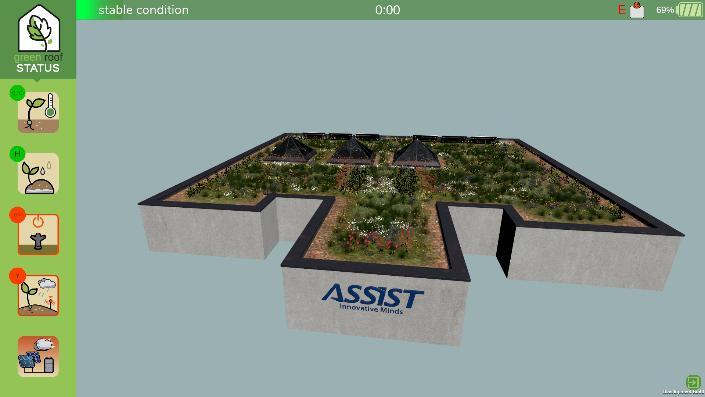
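The timeline and Alert button described for ENGAGE could be driven by logic along these lines. This is a hedged sketch, not the application's implementation: `Incident`, `group_incidents`, `alert_active`, the 5-second gap used to merge consecutive detections into one incident, and the 10-second alert hold time are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    start_s: float      # video time of the first detection, in seconds
    last_seen_s: float  # video time of the latest detection
    labels: list        # target classes seen during the incident


def group_incidents(frames, gap_s=5.0):
    """Merge per-frame detections into timeline incidents.

    `frames` is a time-ordered iterable of (timestamp_s, labels) pairs, where
    `labels` lists the target classes detected in that frame. Detections at
    most `gap_s` seconds apart are treated as the same incident.
    """
    timeline = []
    for timestamp_s, labels in frames:
        if not labels:
            continue
        if timeline and timestamp_s - timeline[-1].last_seen_s <= gap_s:
            incident = timeline[-1]
            incident.last_seen_s = timestamp_s
            for label in labels:
                if label not in incident.labels:
                    incident.labels.append(label)
        else:
            # dict.fromkeys removes duplicates while keeping order.
            timeline.append(Incident(timestamp_s, timestamp_s, list(dict.fromkeys(labels))))
    return timeline


def alert_active(timeline, now_s, hold_s=10.0):
    """Keep the Alert button on while the latest incident is still recent."""
    return bool(timeline) and now_s - timeline[-1].last_seen_s <= hold_s
```

Grouping nearby detections keeps the timeline readable: a person holding a rifle in front of the camera for a minute shows up as one incident rather than as hundreds of per-frame entries.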

Green Roof

→ Proposed by Tudor Moldovan, Iulian Tudurean, and Iulian Lavric

The Green Roof project aims to showcase the many benefits of adding a green roof to a building, using our company’s headquarters as a working example.

The prototype combines three main areas of craftsmanship and innovation:

- the physical assembly of hardware components and their functionality, illustrating the scalability and practicality of the system;

- the “powerhouse”, built on the Unity platform, which collects and interprets data from these components;

- an intuitive and efficient app with a fresh, interactive design, which provides real-time information so that users are fully aware and in control of their green roof ecosystem.

Combined into an intelligent, semi-autonomous, integrated “green” solution, all of these features were engineered with one goal in mind: helping our planet, which faces ever-increasing ecological damage and alarming climate change, one small green roof at a time.

Autisma

→ Proposed by Mihaela Chistol, Cătălin Zambalic, and Andrei Barba

“The measure of a civilization is how it treats its weakest members” - Mahatma Gandhi

About 1 in 59 children is diagnosed with an autism spectrum disorder. People affected by such a disorder typically have difficulties with verbal and non-verbal communication and with social interaction.

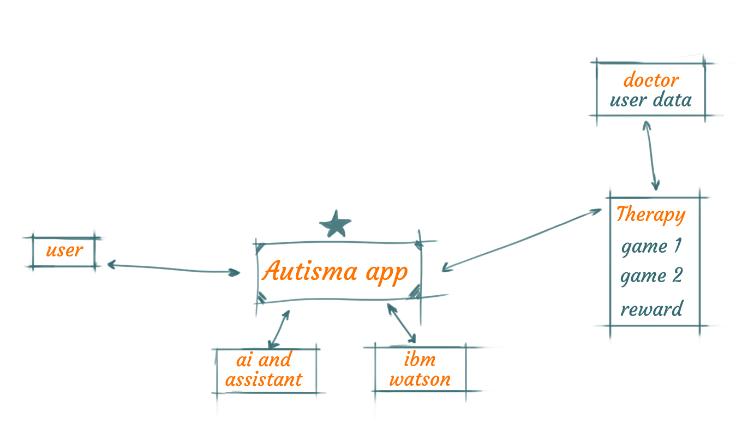

Autisma is a digital assistant embedded in a game designed to improve the cognitive, communication, and memory skills of children aged 5 to 12 who are affected by such disorders.

The app was developed based on two well-known therapy methods, ABA (Applied Behavior Analysis) and Logopedia (a digital speech therapy tool), and was researched in collaboration with therapists Andreea Panait and Alexandrina Lucanu from Help Autism Suceava.

These were the technical goals of the project:

- developing an app that changes the content dynamically, based on user progress;

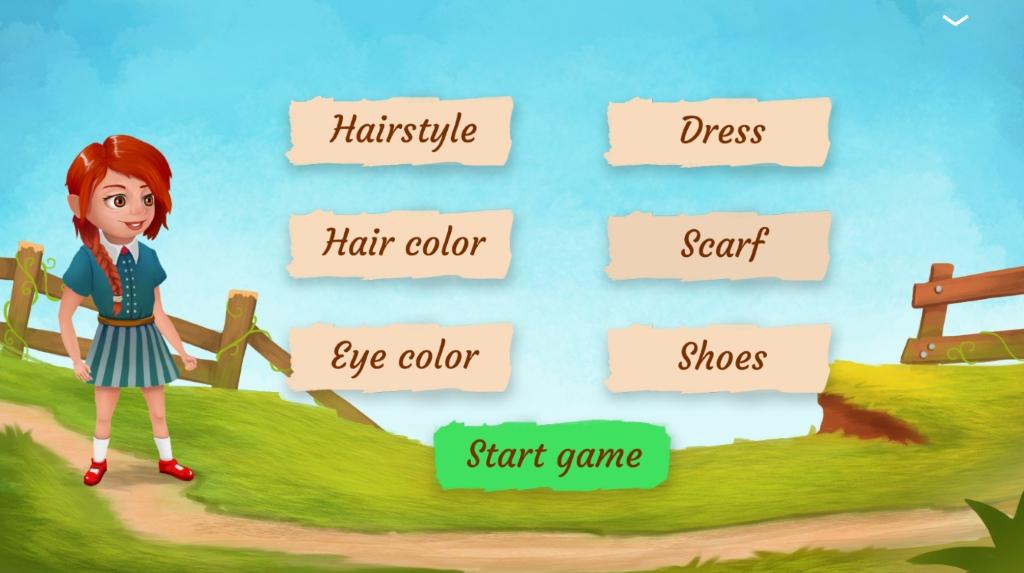

- delivering a highly customizable user experience;

- creating a multi-user app that also provides doctors with data about each user, which can be used to tailor therapy sessions;

- building a modular app that can be improved upon with ease.

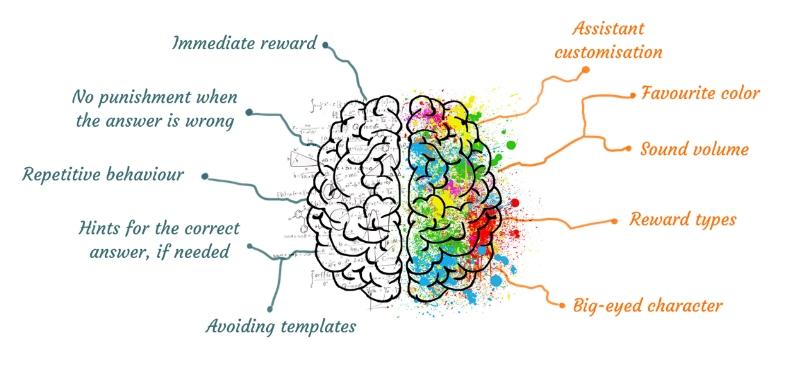

The features of the Assistant module are as follows:

- dynamic generation of game objects based on user progress;

- handling of teaching objects;

- animations that respond to player input;

- an assistant that says the name of the object once it’s correctly guessed.

Figure 1

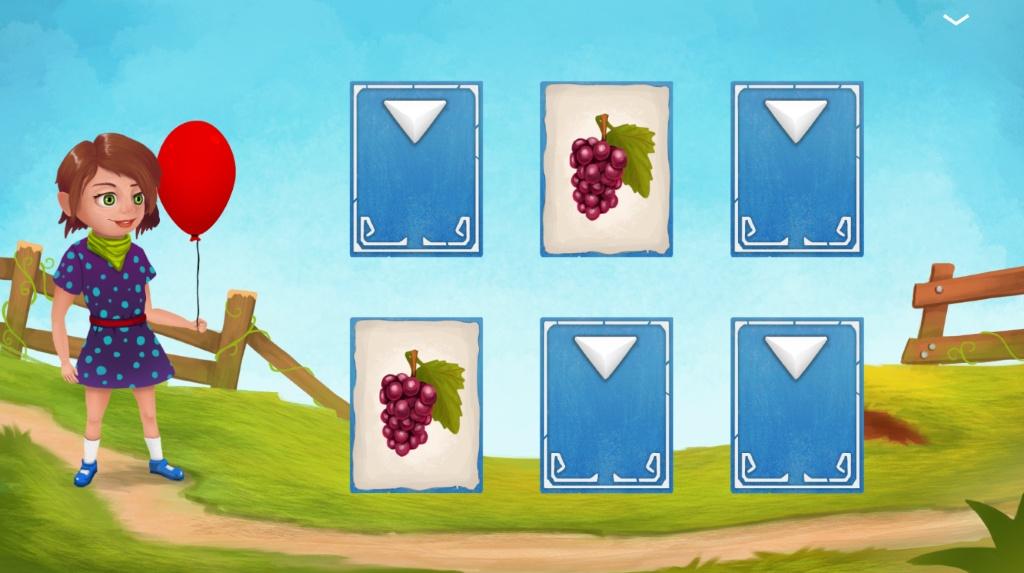

The first game (see Figure 2) is a matching game that aims to help users improve their memory and awareness skills.

Figure 2

The second game (see Figure 3) aims to help users improve their communication and social skills and their pronunciation, and to learn new words.

Autisma is designed to be a fun, repetition-based app that aims to improve the day-to-day quality of life of children with autism spectrum disorders. Its advantages include the following:

- cheaper than traditional therapy sessions;

- designed both for home/individual use and for help centers;

- easy to export for multiple platforms.

Figure 3

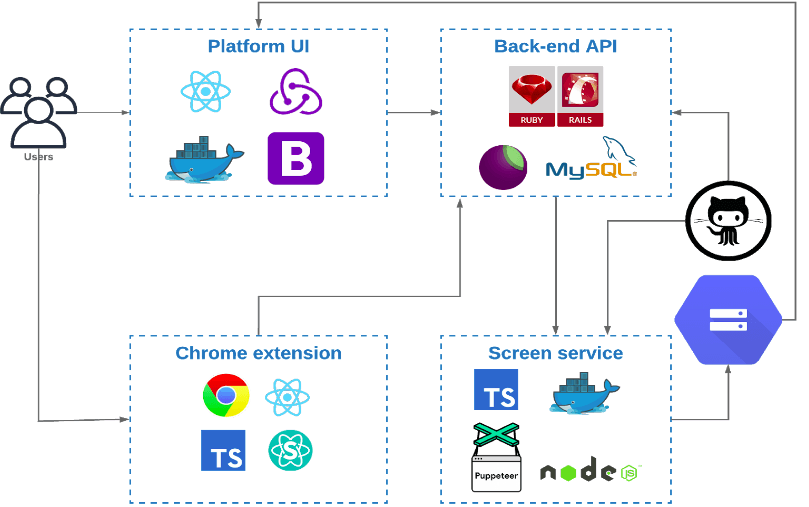
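Both the goal of changing content dynamically based on user progress and the Assistant module's dynamic generation of game objects imply some adaptation rule. One simple possibility is sketched below in Python. The function names, the 80% and 40% accuracy thresholds, and the range of 2 to 8 objects per round are assumptions for illustration, not Autisma's actual rules.

```python
import random


def next_object_count(current, recent_answers, min_objects=2, max_objects=8,
                      raise_at=0.8, lower_at=0.4):
    """Adjust how many objects the next round shows, based on recent accuracy.

    `recent_answers` holds booleans (True = correct) for the last few rounds.
    Hypothetical rule: high accuracy adds an object, low accuracy removes one.
    """
    if not recent_answers:
        return current
    accuracy = sum(recent_answers) / len(recent_answers)
    if accuracy >= raise_at:
        return min(current + 1, max_objects)
    if accuracy <= lower_at:
        return max(current - 1, min_objects)
    return current


def pick_round_objects(pool, count, seed=None):
    """Choose `count` distinct teaching objects (e.g. picture names) for a round."""
    rng = random.Random(seed)
    return rng.sample(list(pool), min(count, len(pool)))
```

Keeping the step size at one object per adjustment avoids sudden jumps in difficulty, which matters for a repetition-based app aimed at young children.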

Automation visual QA tool

→ Proposed by Nicoleta Cajvan, Petru Cioata, and Andrei Cioban

Time is money. You hear this so often because time is arguably the most valuable resource, no matter what industry you work in: once it has passed, you can't get it back.

In product development, the QA process has a large impact on delivery time. The Automation visual QA tool was developed to reduce the time spent on regression testing and to keep bugs out of production.

Although the project is a QA tool, its target users are programmers. On pull request or push events, they are notified about possible bugs, meaning visual differences between the current branch and the production master branch. This way, they can fix UI bugs before their code is merged into master for regression testing, and testers can focus on critical issues rather than obvious UI bugs.
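The core check behind comparing the current branch with master can be illustrated with a naive pixel comparison. The sketch below assumes both screenshots have already been decoded into rows of RGB tuples; the `diff_ratio` and `should_notify` names, the per-channel tolerance, and the notification threshold are hypothetical, and the team's screen service may compare images differently.

```python
def diff_ratio(baseline, candidate, tolerance=0):
    """Fraction of pixels that differ between two screenshots of the same page.

    Both images are lists of rows of (R, G, B) tuples, e.g. master vs. branch.
    A pixel counts as changed if any channel differs by more than `tolerance`.
    """
    same_size = len(baseline) == len(candidate) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(baseline, candidate)
    )
    if not same_size:
        return 1.0  # a page that changed size is itself a visual difference
    total = changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if any(abs(a - b) > tolerance for a, b in zip(pixel_a, pixel_b)):
                changed += 1
    return changed / total if total else 0.0


def should_notify(baseline, candidate, threshold=0.001, tolerance=8):
    """Report a possible UI bug when more than `threshold` of the pixels changed."""
    return diff_ratio(baseline, candidate, tolerance) > threshold
```

A small tolerance absorbs anti-aliasing noise between renders, while the threshold decides how much change is worth interrupting a developer for. A production service would more likely use an image library and also return a highlighted diff image; the plain loops here just keep the idea dependency-free.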

To develop this project, we used the following technologies:

- Back-end API: developed in Ruby on Rails, with MySQL;

- Platform UI: developed in React, Docker, and Bootstrap;

- Chrome extension: developed in React and TypeScript;

- Screen service: developed in Node.js, TypeScript, Docker, and Puppeteer;

- GitHub webhooks.

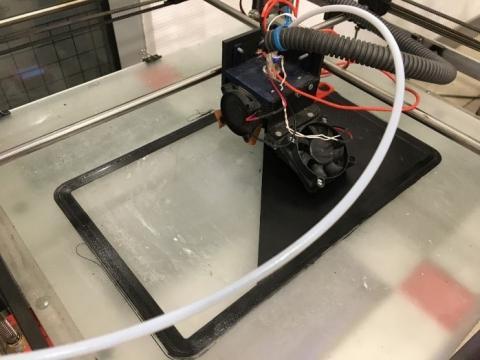
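Since notifications are triggered by pull request and push events through GitHub webhooks, a receiver has to authenticate each delivery and extract the branch to screenshot. The project's back end is a Rails API; purely for illustration, the sketch below does the equivalent in Python. The `X-GitHub-Event` and `X-Hub-Signature-256` headers and the payload fields used here are part of GitHub's webhook format, while the function names and the set of pull request actions that trigger a run are assumptions.

```python
import hashlib
import hmac
import json

# Assumed: pull request actions that should trigger a new screenshot run.
PR_ACTIONS = {"opened", "synchronize", "reopened"}


def verify_signature(secret, body, signature_header):
    """Check X-Hub-Signature-256: "sha256=" + hex HMAC-SHA256 of the raw body."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison, so the check does not leak timing information.
    return hmac.compare_digest(expected.encode(), (signature_header or "").encode())


def screenshot_job(event, body):
    """Return (branch, commit_sha) to screenshot, or None to ignore the delivery.

    `event` is the X-GitHub-Event header value and `body` the raw JSON payload.
    """
    payload = json.loads(body)
    if event == "pull_request" and payload.get("action") in PR_ACTIONS:
        head = payload["pull_request"]["head"]
        return head["ref"], head["sha"]
    if event == "push" and payload.get("ref", "").startswith("refs/heads/"):
        if payload.get("deleted"):
            return None  # the branch was deleted; nothing to compare
        return payload["ref"].removeprefix("refs/heads/"), payload["after"]
    return None
```

Tag pushes and unrelated events (such as issue comments) return `None`, so only branch updates reach the screen service. Pushes to master would also produce a job, which a real system could use to refresh its baseline screenshots.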

Wood Beacon

→ Proposed by Ionut Sebastian Mindrescu, Paul Verciuc, and Tudor Andronic

Wood Beacon is a device that aims to detect illegal deforestation. Using machine learning and a high-sensitivity microphone, it analyzes suspicious forest sounds in real time, such as those made by chainsaws, axes, and engines. When the device detects one of these sounds, it sends an alert to a website with the exact location of the sound’s source.

For this project, we used a Raspberry Pi with a LoRa/GPS HAT (based on the SX1276/SX1278 transceiver). We used LoRaWAN with The Things Network to send the alerts to the nearest LoRa gateway. The machine learning script is written in Python with TensorFlow, and the website uses a Google API to draw the forest parcels and show the exact location of the sound’s source.
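LoRaWAN favors small payloads, so an alert is better sent as a few packed bytes than as JSON. The following Python sketch shows one possible encoding, assuming the alert carries the GPS position, the detected sound class, and a confidence score. The field layout, the scaling factor, and the class list are hypothetical, not Wood Beacon's actual format.

```python
import struct

# Hypothetical codes for the sound classes the model reports.
SOUND_CLASSES = ["chainsaw", "axe", "engine"]

# Big-endian: int32 latitude and int32 longitude (degrees * 1e6),
# uint8 sound class, uint8 confidence in percent. 10 bytes in total.
PAYLOAD_FORMAT = ">iiBB"
SCALE = 1_000_000


def encode_alert(lat, lon, sound, confidence):
    """Pack an alert into a compact uplink payload."""
    return struct.pack(
        PAYLOAD_FORMAT,
        round(lat * SCALE),
        round(lon * SCALE),
        SOUND_CLASSES.index(sound),
        round(confidence * 100),
    )


def decode_alert(payload):
    """Inverse of encode_alert, as the website's back end might run it."""
    lat, lon, sound_id, percent = struct.unpack(PAYLOAD_FORMAT, payload)
    return lat / SCALE, lon / SCALE, SOUND_CLASSES[sound_id], percent / 100
```

Scaling coordinates by 10^6 keeps about 0.1 m of precision in a signed 32-bit integer, which is more than the GPS module can deliver, and it avoids sending floating-point values over the radio link.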

We also 3D-printed a custom case for the device and attached a 3 W solar panel to extend the autonomy of its 20,000 mAh power bank.

Horizon App

→ Proposed by Andrei Traciu

Product High-Level Overview

Horizon is a mobile application designed for blind people. Its main purpose is to assist them and make their interactions with the world much simpler. Using nothing more than a phone, the project aims to give blind people a form of sight.

The main features of the application are:

- help with physical interactions with items such as food, money, and general household objects;

- navigation from one point to another via Google Maps;

- real-time video processing, with feedback about the objects that the camera detects.

Usage:

A blind user will take out their phone, connect a pair of headphones, and point the camera at their surroundings so that the application can process every frame and provide feedback.

The feedback system will consist of three channels:

- haptic feedback;

- default sound alarms;

- text-to-speech.

This functionality is achieved by combining three core elements:

Real-time video processing will take frames from the camera stream and send them to the knowledge platform.

The knowledge platform will use a machine learning algorithm that will be trained to detect different objects: from an ordinary apple to a full pedestrian crossing with traffic lights.

Real-time feedback will be provided to the user after the objects from the frames are detected so that the user can take specific actions.

Technical Approach:

- The data input

The application will be built for iOS in Swift, using Xcode. Through Apple's AVFoundation framework, the app will access the camera's video buffer, which provides the image samples.

- Machine learning algorithm

Different tools and platforms will be tested to find the one that gives the best object detection results. In the first stage, the image sets will be created manually; later, data captured by the camera will be used.

Tools: Create ML, Turi Create, Anaconda Navigator, Jupyter notebooks, and TensorFlow

- Feedback system

Once the algorithm returns its results, they will be presented to the user through haptic or audio feedback. Users will be able to configure specific actions.

The sound system will also be able to tell the user what objects are in front of them by using the text-to-speech API from Apple.
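To illustrate how detections might be routed to the three feedback channels with user-configurable actions, here is a small Python sketch. The app itself is planned in Swift; the `Channel`, `FeedbackConfig`, and `plan_feedback` names, the per-label channel mapping, the confidence cutoff, and the 3-second repeat interval are hypothetical, and the actual haptic, alarm, and text-to-speech calls are left out.

```python
from dataclasses import dataclass, field
from enum import Enum


class Channel(Enum):
    HAPTIC = "haptic"
    ALARM = "alarm"    # one of the default sound alarms
    SPEECH = "speech"  # text-to-speech


@dataclass
class FeedbackConfig:
    """Hypothetical user settings for how detections are reported."""
    per_label: dict = field(default_factory=dict)  # label -> Channel
    default: Channel = Channel.SPEECH
    min_confidence: float = 0.6
    repeat_after_s: float = 3.0  # minimum gap before repeating the same object


def plan_feedback(detections, config, last_announced, now_s):
    """Turn one frame's (label, confidence) detections into feedback actions.

    `last_announced` maps label -> time it was last reported and is updated
    in place. Returns (channel, label) pairs, most confident object first.
    """
    actions = []
    for label, confidence in sorted(detections, key=lambda d: d[1], reverse=True):
        if confidence < config.min_confidence:
            continue
        last = last_announced.get(label)
        if last is not None and now_s - last < config.repeat_after_s:
            continue  # reported recently; avoid overwhelming the user
        actions.append((config.per_label.get(label, config.default), label))
        last_announced[label] = now_s
    return actions
```

The repeat interval matters because the camera delivers many frames per second; without it, an apple on the table would be announced over and over while the user holds the phone still.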

We would like to congratulate all of our colleagues and students who dared to think outside the box, leave their comfort zones, and turn their innovative ideas into real projects.

We would also like to thank the representatives of the Faculty of Electrical Engineering and Computer Science from the University of Suceava for supporting this contest every year and for taking part in the selection process to find the most innovative projects!